Pick A Database, Any Database

Alright, maybe not any database. Let’s stick with SQL Server. That’s the devil we know.

At some point in your life, you’re going to construct a query that takes user input, and that input is likely going to come in the form of a parameter.

It could be a stored procedure, dynamic SQL, or something from your application. But there it is.
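To make that concrete, here's a minimal sketch of two of those forms. The table `dbo.Posts`, the procedure `dbo.GetPostsByUser`, and the value `22656` are hypothetical stand-ins, not anything from a real schema, and `CREATE OR ALTER` assumes SQL Server 2016 SP1 or later.

```sql
-- Hypothetical table and procedure names, for illustration only.
CREATE OR ALTER PROCEDURE dbo.GetPostsByUser
    @OwnerUserId int
AS
BEGIN
    SET NOCOUNT ON;

    -- The plan for this query is compiled using whatever value
    -- @OwnerUserId holds on the first execution, then reused.
    SELECT p.Id, p.Score, p.CreationDate
    FROM dbo.Posts AS p
    WHERE p.OwnerUserId = @OwnerUserId;
END;
GO

-- The same query as parameterized dynamic SQL.
-- Because the value is passed as a parameter rather than concatenated
-- into the string, the cached plan can be reused across values.
DECLARE @sql nvarchar(MAX) = N'
SELECT p.Id, p.Score, p.CreationDate
FROM dbo.Posts AS p
WHERE p.OwnerUserId = @OwnerUserId;';

EXEC sys.sp_executesql
    @sql,
    N'@OwnerUserId int',
    @OwnerUserId = 22656; -- hypothetical value
```

Application code that sends parameterized queries generally ends up in the same place as the `sp_executesql` call.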

Waiting. Watching.

Sniffing.

Defining A Problem

When we use parameters, we re-use execution plans, at least until a Qualifying Event™ occurs.

What’s a qualifying event?

- Recompile hint

- Stats update

- Temp table modification threshold

- Plan eviction

- Server restart
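If you want to cause a few of these on purpose, something like the following should do it. This is a sketch under assumptions: `dbo.GetPostsByUser` and `dbo.Posts` are hypothetical names, and the temp table threshold and server restart items aren't shown because they aren't things you trigger with a single command.

```sql
-- All object names here are hypothetical stand-ins.

-- Recompile hint: compile a plan just for this execution.
-- Any plan already cached for the procedure is left alone.
EXEC dbo.GetPostsByUser @OwnerUserId = 22656 WITH RECOMPILE;

-- Mark the procedure so its next execution compiles a new plan.
EXEC sys.sp_recompile @objname = N'dbo.GetPostsByUser';

-- Stats update: can invalidate cached plans that depend on these statistics.
UPDATE STATISTICS dbo.Posts WITH FULLSCAN;

-- Plan eviction: throw out a single plan by its handle.
DECLARE @plan_handle varbinary(64);

SELECT TOP (1)
    @plan_handle = ps.plan_handle
FROM sys.dm_exec_procedure_stats AS ps
WHERE ps.database_id = DB_ID()
AND   ps.object_id = OBJECT_ID(N'dbo.GetPostsByUser');

IF @plan_handle IS NOT NULL
BEGIN
    DBCC FREEPROCCACHE(@plan_handle);
END;

-- Or evict the whole cache. Think hard before running this on a busy server.
-- DBCC FREEPROCCACHE;
```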

Now, it might be reasonable to think that a mature optimizer — and it is an optimizer, not just a planner — would be able to do something a bit more optimal. After all, why would anyone think it would take the same amount of work to get through 100 rows as it would take to get through 1,000,000 rows? It’s a fundamentally different approach.

A quick run to the grocery store to replenish a few things requires a far different mindset from shopping for a large family meal. You have to choose between a basket and a cart, decide whether you can jump right to the couple of spots you need or have to walk up and down every aisle, and maybe even write down a list because it doesn’t all fit into brain memory.

One might also have the expectation that if a significant inaccuracy is detected at runtime, the strategy might change. While that does sort of happen with Adaptive Joins, it’s not a full plan rewrite.

Detecting A Problem

The plan cache usually sucks for this, unless you’re saving the data off to more stable tables. Why? Because most people only figure out they’ve been sniffed after a plan changes, which means the old, good plan isn’t in the cache anymore. You know, when end users start complaining, the app goes unresponsive, you can’t connect to the server, etc.

You could set your watch to it.

But sometimes it’s there. Some funny looking little plan that looks quite innocent, but seems to do a lot of work when you bang it up against other DMVs.
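One way to go looking for those funny little plans is to compare the best and worst executions of each cached query. This is a rough sketch, not a definitive diagnostic: it assumes a version of SQL Server where `sys.dm_exec_query_stats` exposes `min_rows` and `max_rows`, and the `TOP (20)` cutoff is arbitrary.

```sql
-- Cached queries whose fastest and slowest executions are far apart.
-- A big gap between min and max rows or reads on the same plan
-- is a hint (not proof) that one plan is serving very different parameters.
SELECT TOP (20)
    qs.execution_count,
    qs.min_elapsed_time,   -- microseconds
    qs.max_elapsed_time,   -- microseconds
    qs.min_rows,
    qs.max_rows,
    qs.min_logical_reads,
    qs.max_logical_reads,
    qs.creation_time,      -- when this plan was compiled
    st.text AS batch_text,
    qp.query_plan
FROM sys.dm_exec_query_stats AS qs
CROSS APPLY sys.dm_exec_sql_text(qs.sql_handle) AS st
OUTER APPLY sys.dm_exec_query_plan(qs.plan_handle) AS qp
WHERE qs.execution_count > 1
ORDER BY qs.max_elapsed_time - qs.min_elapsed_time DESC;
```

Keep in mind this only sees what's currently in the cache, which is the whole problem described above.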

If you have the luxury, Query Store is a much better tool for detecting plan changes. It’s even got reports built in just for that.
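If you'd rather query it than click through reports, a sketch along these lines lists queries that have had more than one plan, with the widest gaps in average duration first. It assumes Query Store is enabled on the current database (SQL Server 2016 or later), and the column aliases are made up for readability.

```sql
-- Queries with more than one plan in Query Store,
-- ordered by the gap between their best and worst average duration.
SELECT
    q.query_id,
    COUNT(DISTINCT p.plan_id) AS plan_count,
    MIN(rs.avg_duration) AS best_avg_duration_us,
    MAX(rs.avg_duration) AS worst_avg_duration_us
FROM sys.query_store_query AS q
JOIN sys.query_store_plan AS p
    ON p.query_id = q.query_id
JOIN sys.query_store_runtime_stats AS rs
    ON rs.plan_id = p.plan_id
GROUP BY q.query_id
HAVING COUNT(DISTINCT p.plan_id) > 1
ORDER BY MAX(rs.avg_duration) - MIN(rs.avg_duration) DESC;
```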

For the extra fancy amongst you, I pray that your expensive monitoring tool has a way to tell you when query plans change, or when normally fast queries suddenly aren’t.

Deciphering A Problem

This is where things can get difficult, unless you’re monitoring or logging information. You typically need a few different combinations of parameter values to feed into your query, so you can see what changed and when. Quite often, there’s no going back easily.
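A simple way to test combinations is to control which value the plan gets compiled for, then run the other value against that plan. The sketch below assumes a hypothetical procedure `dbo.GetPostsByUser` with an `@OwnerUserId` parameter, where `1` matches few rows and `22656` matches many. Swap in your own procedure and values, and try it somewhere that isn't production.

```sql
SET STATISTICS TIME, IO ON;

-- Round one: compile for the small value, then reuse that plan for the big one.
EXEC sys.sp_recompile @objname = N'dbo.GetPostsByUser';
EXEC dbo.GetPostsByUser @OwnerUserId = 1;      -- hypothetical: few rows, compiles the plan
EXEC dbo.GetPostsByUser @OwnerUserId = 22656;  -- hypothetical: many rows, reuses it

-- Round two: compile for the big value, then reuse that plan for the small one.
EXEC sys.sp_recompile @objname = N'dbo.GetPostsByUser';
EXEC dbo.GetPostsByUser @OwnerUserId = 22656;  -- compiles the plan
EXEC dbo.GetPostsByUser @OwnerUserId = 1;      -- reuses it

SET STATISTICS TIME, IO OFF;
```

If you collect actual execution plans while doing this, the parameter list in the plan properties shows both the compiled value and the runtime value, which tells you whose plan you're really running.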

Let’s say you had a plan, and it was a good plan. Then one of those pesky qualifying events comes along, and it’s decided that you need a new plan.

And what if… that new plan is worse? What if, no matter how much you recompile, update stats, or fiddle with cardinality estimation, you just can’t go back to the way things were without lots of hints or changes to the query? Maybe that’s not parameter sniffing. Maybe that’s parameter snuffing. I’m gonna copyright that.

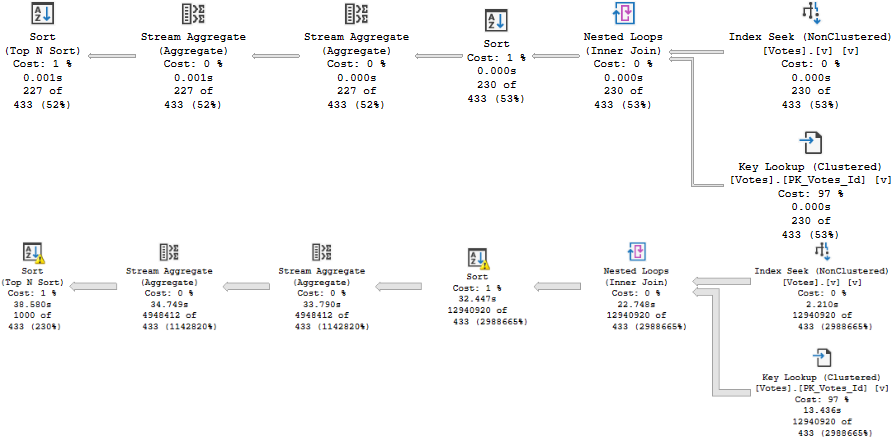

Most parameter sniffing will result in a plan with a set of bad choices for different amounts of data, which will result in something like this:

This isn’t a “bad estimate” — it was a pretty good estimate for the first parameter value. It just wasn’t a good estimate for the second parameter value.

And to be honest, quite a few parameter sniffing issues come from Nested Loops. Not because Nested Loops is bad, but because it’s bad for large amounts of data, especially in a serial plan. It’s a pretty easy way to gum up a query, though. Make it get stuck in a loop for 13 million rows. It wasn’t fast? No kidding. Poof, be gone.

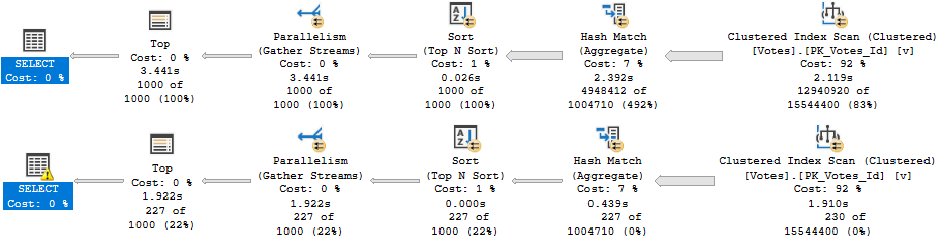

But then opposite-land isn’t good, either.

This plan probably makes plenty of sense for a big chunk of data. One big scan, one big hash, one big sort. Done.

Of course, for a small amount of data, we go from taking 1ms to taking 2s. Small amount of data people will likely not be happy with that. Your server might not be either, what with all the extra CPU resources we’re using in this here parallel plan all the time now.

Tomorrow, we’ll look at how sometimes you can fix parameter sniffing with better indexes.

Thanks for reading!
