Hidden Away

If one dare go looking, a list of Microsoft Approved™ solutions for dealing with last page contention exists.

I’m going to walk through my experiences with them in different scenarios, so you get a better understanding of which ones work best, and which ones can be tough to implement.

Keep a few things in mind, though:

- Since 2019 adoption is pretty low so far, I’m not including optimize for sequential key, even though I think it’s the best overall solution. It’s minimally invasive, doesn’t require changing indexes, key columns, or column order, and doesn’t require a rebuild to enable. If I had to pick my favorite, this’d be it. Good job all around, there.

- None of the pre-2019 solutions are exactly friendly to big tables if you need to make the changes in place, and are endlessly complicated by other features and paradigms. Foreign keys? Drop those first. AG? Put it in async. Features that rely on Primary Keys? Turn off, pray you can turn back on. The list is extensive and ever-growing.

- In non-Enterprise versions of SQL Server, where there are no ONLINE options for changing indexes, you’ll probably need to ask for outage windows to avoid blocking when making in-place changes. Though I typically find offline changes run faster, they’re far less friendly to concurrency.
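For reference, the 2019 option from the first bullet is a per-index setting. Here's a minimal sketch, assuming SQL Server 2019 or later and the vb_pk_id primary key from the example table used in the rest of this post:

```sql
-- Assumes SQL Server 2019+. Turning the option on does not rebuild the index.
ALTER INDEX vb_pk_id ON dbo.VotesBig
    SET (OPTIMIZE_FOR_SEQUENTIAL_KEY = ON);

-- Check which indexes on the table currently have it enabled.
SELECT
    i.name,
    i.optimize_for_sequential_key
FROM sys.indexes AS i
WHERE i.object_id = OBJECT_ID(N'dbo.VotesBig');
```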

Example Table

But anyway, let’s review these things. That’s what we’re here to do.

This is the table we’re going to be using as an example, which I’ve inflated a bit to have ~200 million rows.

CREATE TABLE dbo.VotesBig
(
    Id INT IDENTITY,
    PostId INT NOT NULL,
    UserId INT NULL,
    BountyAmount INT NULL,
    VoteTypeId INT NOT NULL,
    CreationDate DATETIME NOT NULL,
    CONSTRAINT vb_pk_id
        PRIMARY KEY CLUSTERED ( Id ASC )
);

Method 1

Make the column that contains sequential values a nonclustered index, and then move the clustered index to another column. For example, for a primary key on an identity column, remove the clustered primary key, and then re-create it as a nonclustered primary key.

This sounds just dandy, minus the above caveats about changing keys around. Using the VotesBig table as an example, you could either use PostId or UserId as the new non-unique clustered index key. In the public release of the Stack Overflow database, the UserId column is largely scrubbed out, so I’ll be using PostId in my example.

In real life I’d opt for UserId, which would be far less likely to have hot spots towards the end of the index, where new questions and answers with higher Ids are likely to be attracting insert activity from voting.

I gotta be honest, though: every time I’ve tried this method, I’ve ended up with contention on any index that leads with the identity column. Perhaps not as drastic, but still there. Wherever you have ever-increasing keys, you run that risk, and if you’re hitting the issue sometimes just shifting it to another index isn’t sufficient.
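If you want to see whether the contention really moved rather than went away, one rough way is to look at live page latch waits while the insert workload is running. This is only a sketch of the kind of check I mean, not a full diagnostic:

```sql
-- Sessions currently waiting on page latches.
-- Many sessions stacked up on the same page usually points at a hot page.
SELECT
    wt.session_id,
    wt.wait_type,
    wt.wait_duration_ms,
    wt.resource_description -- database_id:file_id:page_id for page latches
FROM sys.dm_os_waiting_tasks AS wt
WHERE wt.wait_type LIKE N'PAGELATCH[_]%'
ORDER BY wt.wait_duration_ms DESC;
```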

If you’re going to use this method, please please please think about dropping nonclustered indexes first, and adding them back after you create the clustered index. This avoids having to modify them to disinclude, and then include the clustered index key columns. They really do get in the way when removing and re-adding clustered indexes.

...Drop Nonclustered Indexes First...

ALTER TABLE dbo.VotesBig DROP CONSTRAINT vb_pk_id;
CREATE CLUSTERED INDEX cx_id ON dbo.VotesBig(PostId);
ALTER TABLE dbo.VotesBig ADD CONSTRAINT vb_pk_postid PRIMARY KEY NONCLUSTERED(Id);
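The "drop nonclustered indexes first" step depends on what's on your table, so it's worth taking inventory before touching anything. A minimal sketch for listing the candidates, assuming you script out their definitions somewhere safe before dropping them:

```sql
-- Nonclustered indexes on the table that aren't backing a constraint.
SELECT
    i.name,
    i.type_desc,
    i.is_unique
FROM sys.indexes AS i
WHERE i.object_id = OBJECT_ID(N'dbo.VotesBig')
AND   i.type_desc = N'NONCLUSTERED'
AND   i.is_primary_key = 0
AND   i.is_unique_constraint = 0;
```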

Method 2

Reorder the clustered index definition in such a way that the leading column isn’t the sequential column. This requires that the clustered index be a composite index. For example, in a customer table, you can make a CustomerLastName column be the leading column, followed by the CustomerID. We recommend that you thoroughly test this method to make sure that it meets performance requirements.

Okay, this is slightly less confusing. But hell, you better hope you don’t have queries relying on seeking to “CustomerID” if you do this (at least without also searching on “CustomerLastName” with an equality, too). Just like above, yeah, you could create another index to satisfy them by leading with “CustomerID”, but you may find yourself dealing with contention on that index now.

I’ve also seen this cause issues with foreign keys where you may no longer have a single column candidate key.

Not that I like foreign keys all that much. They’re sort of dreary in SQL Server. They don’t really help with cardinality estimation, and join elimination is a farce, largely. One of my favorite recent clients had columns named “_FK” to denote relationships, without any actual foreign keys to back them up. They were just indexed properly.

Shocking, I know.

...Drop Nonclustered Indexes First...

ALTER TABLE dbo.VotesBig DROP CONSTRAINT vb_pk_id;

ALTER TABLE dbo.VotesBig
    ADD CONSTRAINT vb_pk_id PRIMARY KEY CLUSTERED(PostId, Id);

Anyway, this is a somewhat dangerous option without careful testing and domain knowledge about the way the table is queried and referenced.
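To make the seek concern concrete, here's a sketch of the two query shapes against the reordered key. The variable values are placeholders, and it assumes no other index on the table leads with Id:

```sql
DECLARE @PostId INT = 1, @Id INT = 1; -- placeholder values

-- Can seek: equality on the leading key column (PostId), then Id.
SELECT vb.Id, vb.VoteTypeId
FROM dbo.VotesBig AS vb
WHERE vb.PostId = @PostId
AND   vb.Id = @Id;

-- Can't seek on the clustered index: Id is now the second key column,
-- so without another index leading with Id this turns into a scan.
SELECT vb.Id, vb.VoteTypeId
FROM dbo.VotesBig AS vb
WHERE vb.Id = @Id;
```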

Method 3

Add a nonsequential hash value as the leading index key. This will also spread out the inserts. A hash value could be generated as a modulo that matches the number of CPUs on the system. For example, on a 16-CPU system, you can use a modulo of 16. This method spreads out the INSERT operations uniformly against multiple database pages.

Well, well, well. This is fun. It’s a lot like the above option, except you have to add a column with no business logic whatsoever, and no hope of being used by queries as a predicate. That puts you in an extra tough spot if you need to search on “CustomerID” often for the same reasons as above. You change this part, then you add the nonclustered index, you end up with contention on the nonclustered index. Yes, I do feel like I have to keep saying that.

Suddenly, upgrading to 2019 seems like less of a headache.

Anyway, there are some shortcuts you can take that the Microsoft post doesn’t mention. For this example, you don’t need a persisted not null computed column.

Adding a column with that definition would be quite painful for a big table.

For you.

...Drop Nonclustered Indexes First...

ALTER TABLE dbo.VotesBig
    ADD HashValue AS ISNULL(CONVERT(TINYINT, ABS(Id) % 16), 0);

ALTER TABLE dbo.VotesBig DROP CONSTRAINT vb_pk_id;

ALTER TABLE dbo.VotesBig
    ADD CONSTRAINT vb_pk_hv_id
        PRIMARY KEY CLUSTERED ( HashValue, Id );

You can use the ISNULL function to mark the column as not-nullable, and it doesn’t need to be persisted to be used in the Primary Key. It will need to be persisted to use in a later example with partitioning that uses a similar technique as this, but with an upside missing here around index key order.

One thing I’ve had some success with using this method is changing the modulus math to match the number of cores in a NUMA node. It’s certainly something to mess with, but nothing to get hung up on if performance is acceptable with the first number you choose.
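If you want a starting number for the per-NUMA-node approach, counting visible schedulers per node is one way to get it. This is a sketch, and it assumes schedulers are a close enough stand-in for cores on your server:

```sql
-- Visible online schedulers per NUMA node.
SELECT
    s.parent_node_id,
    COUNT_BIG(*) AS visible_schedulers
FROM sys.dm_os_schedulers AS s
WHERE s.status = N'VISIBLE ONLINE'
GROUP BY s.parent_node_id
ORDER BY s.parent_node_id;
```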

Method 4

Use a GUID as the leading key column of an index to ensure the uniform distribution of inserts.

Note Although it achieves the goal, we don’t recommend this method because it presents multiple challenges, including a large index key, frequent page splits, low page density, and so on.

Hey, it’s hard to run out of GUIDs. You’ll never have to worry about doing a BIGGUID conversion.

But still, yeah. I’d have a tough time going for this one, given that like above, the GUID would have no searchable meaning, and on top of that all your nonclustered indexes would inherit the GUID, and… man. Usually I make fun of people who obsess about page splits, but I think I’d join them here.

You might even talk me into crazy things like “adjusting fill factor” and “doing regular index maintenance” with this setup.

Now I’ve had to go and get drunk and the rest of this post will be terribly unreliable.

Method 5

Use table partitioning and a computed column that has a hash value to spread out the INSERT operations. Because this method uses table partitioning, it’s usable only on Enterprise editions of SQL Server. Note You can use Partitioned tables in SQL Server 2016 SP1 Standard Edition. For more information, see the description of “Table and index partitioning” in the article Editions and supported features of SQL Server 2016.

But okay, hey. We have to add a computed column, and then we have to partition by it. But I promised you that something would be different here, right?

First things first, this part sucks on big tables. This runs for an hour on my laptop, which has 8 3GHz cores and 64GB of RAM.

...Drop Nonclustered Indexes First...

ALTER TABLE dbo.VotesBig
    ADD HashValue AS ISNULL(CONVERT(TINYINT, ABS(Id) % 16), 0) PERSISTED;

The PERSISTED keyword here means we have to go writing this thing to every darn page. What a drag it is getting persisted.

Then we have to create our partition function and scheme. Just don’t try to rename them. Because you can’t. Ha ha ha.

CREATE PARTITION FUNCTION pf_hash (TINYINT)
    AS RANGE LEFT FOR VALUES (0,1,2,3,4,5,6,7,8,9,10,11,12,13,14,15);

CREATE PARTITION SCHEME ps_hash
    AS PARTITION pf_hash ALL TO ([PRIMARY]);

In the long run, though, this can be worth it, because you can do this.

ALTER TABLE dbo.VotesBig DROP CONSTRAINT vb_pk_id;

ALTER TABLE dbo.VotesBig
    ADD CONSTRAINT vb_pk_hv_id
        PRIMARY KEY CLUSTERED ( Id, HashValue ) ON ps_hash(HashValue);

Your index keeps the more valuable column for queries up front, but still partitions by the hash value, and reduces last page contention.
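Once the partitioned primary key is in place, it's worth a quick sanity check that rows really are spread across the partitions. A minimal sketch, assuming the clustered index is index_id 1:

```sql
-- Row counts per partition of the clustered index.
SELECT
    ps.partition_number,
    ps.row_count
FROM sys.dm_db_partition_stats AS ps
WHERE ps.object_id = OBJECT_ID(N'dbo.VotesBig')
AND   ps.index_id = 1 -- the clustered index
ORDER BY ps.partition_number;
```

With 16 boundary values, the function above creates 17 partitions. Since the hash only produces 0 through 15, the last partition should stay empty.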

I experimented with using a Sequence instead of hashing an existing column, but didn’t find it any less painful to put in place.

M.E.T.H.O.D. MAN

Sequences?

It’s possible to replace modulus math on an identity column with a sequence that resets every N values, e.g.

CREATE SEQUENCE dbo.vote_sequence AS TINYINT START WITH 1 INCREMENT BY 1 MINVALUE 1 MAXVALUE 16 CYCLE CACHE 16;

But I don’t think you’d gain much, and you’d just be introducing another dependency. It also doesn’t buy you anything when altering the table, because the column definition would have to have the sequence as a default to fetch the next value from it.
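To show what that dependency looks like, here's a rough sketch on a brand new, empty table. The table name, constraint names, and trimmed-down column list are made up for illustration, and it assumes dbo.vote_sequence from above already exists:

```sql
CREATE TABLE dbo.VotesSeq -- hypothetical table, not part of the example database
(
    Id INT IDENTITY NOT NULL,
    -- Each insert pulls the next value (1 through 16, cycling) from the sequence.
    HashValue TINYINT NOT NULL
        CONSTRAINT df_vs_hv DEFAULT (NEXT VALUE FOR dbo.vote_sequence),
    PostId INT NOT NULL,
    CONSTRAINT vs_pk_hv_id
        PRIMARY KEY CLUSTERED ( HashValue, Id )
);
```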

Partitioned Views?

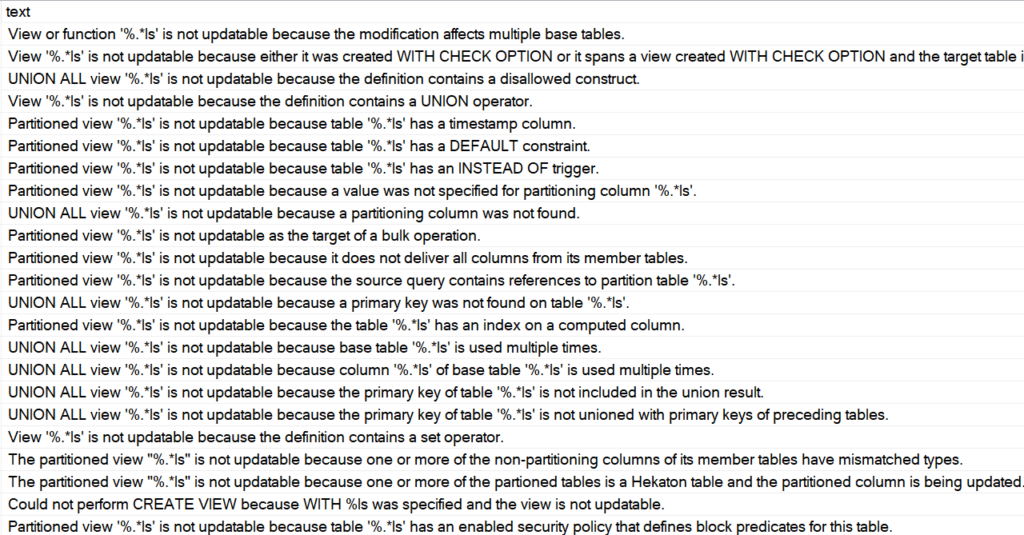

Perhaps conspicuously missing from this post is talk about partitioned views.

As much as I love them, there is an absolutely insane number of rules to get them to be updatable through the view name.

That’s an attractive ability if you’re keen on making as few code changes as possible, but in practice…

They’re only a good idea if you have a lot of control over the table definitions and code, and have a heck of a lot of patience.

There are a lot of gotchas up front around identity columns, primary keys, and a bunch of other stuff.

If you can control the code to point modifications to the right tables, or to the newest table for inserts, they’re potentially an easier-to-manage option than table partitioning.

Why? Because you can define the “new” table with whatever method you’d like to avoid last page contention, and since it’s effectively an empty partition, the intrusive changes aren’t an issue anymore.

Thanks for reading!


hi Eric,

I prefer unique indexes, so that SQL Server does not have to have a Nullable Block in each index.

So I tried to modify your SQL to:

ALTER TABLE dbo.Votes DROP CONSTRAINT vb_pk_postid;

CREATE unique CLUSTERED INDEX cx_id ON dbo.Votes(PostId, id) with (drop_existing=on);

ALTER TABLE dbo.Votes ADD CONSTRAINT vb_pk_postid PRIMARY KEY NONCLUSTERED(Id);

and it turns out that the table is actually smaller than yours.

I thought it might have been bigger, but it would catch-up when more non-clustered indexes were added.

Will this approach also solve your problem on last-page contention?

Flat out, no. It doesn’t sound like you spent much time trying to understand the problem, here.

I’m also not sure how a unique index changes anything with nullability, e.g.

CREATE TABLE dbo.t (id INT NULL);
CREATE UNIQUE CLUSTERED INDEX c ON dbo.t(id);

INSERT dbo.t (id)
VALUES (NULL);

@Eric

I have a question about Method 4 (GUID)

Actually, a sequential GUID is not as bad for page splits as a plain GUID

For instance, some metrics:

https://www.codeproject.com/articles/32597/performance-comparison-identity-x-newid-x-newseque

I don’t really see a question here, but if you use a sequential GUID you’re not going to solve the last page contention problem that this post is about.

Thanks!